We study human cognition, action and interaction on scales ranging from individual experience, through interactions between individuals, to the languages, cultures and dynamics of societies.

Research Methods

- Empirical experimentation

- Computational modelling

- Philosophical enquiry

Including: Conversation Analysis, Discourse Analysis, Robotics, Cognitive Modelling, Machine learning, Formal Modelling, Computational Linguistics, Empirical studies of brain and behaviour.

News

CogSci researchers awarded at world-leading ACM Computer-Human Interaction Conference

When: July 2021

Led by PhD students and lead authors Jack Ratcliffe and Francesco Soave, a group of researchers from CogSci have been awarded an Honourable Mention for their paper at the 2021 ACM CHI Conference on Human Factors in Computing Systems, a world-leading human-computer interaction conference. Only 5% of the 5,844 submissions received were presented with this prestigious award. In the award-winning paper, the authors surveyed 46 XR researchers from both academia and industry to understand the research community’s current practice around XR studies, their experience with remote XR studies, and the potential and drawbacks of using a remote approach. The research found that remote XR research has the potential to be a useful research approach, although it currently suffers from numerous limitations regarding data collection, system development and a lack of clarity around participant recruitment. The full paper can be found in the ACM Digital Library:

Ratcliffe J, Soave F, Bryan-Kinns N, Tokarchuk L, Farkhatdinov I. Extended Reality (XR) Remote Research: a Survey of Drawbacks and Opportunities. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems 2021 May 6 (pp. 1-13).

Take part in our SemEval 2020 task

When: February 2020

As part of the EMBEDDIA project, our SemEval 2020 Task 3 – Predicting the (Graded) Effect of Context in Word Similarity, is now open for submissions. The task enables us to build systems that can predict the effect that context has on human perception of similarity of words, and test these predictions on our new datasets covering four different languages (English, Croatian, Slovene and Finnish). Participants can submit their results online until the 12th of March.

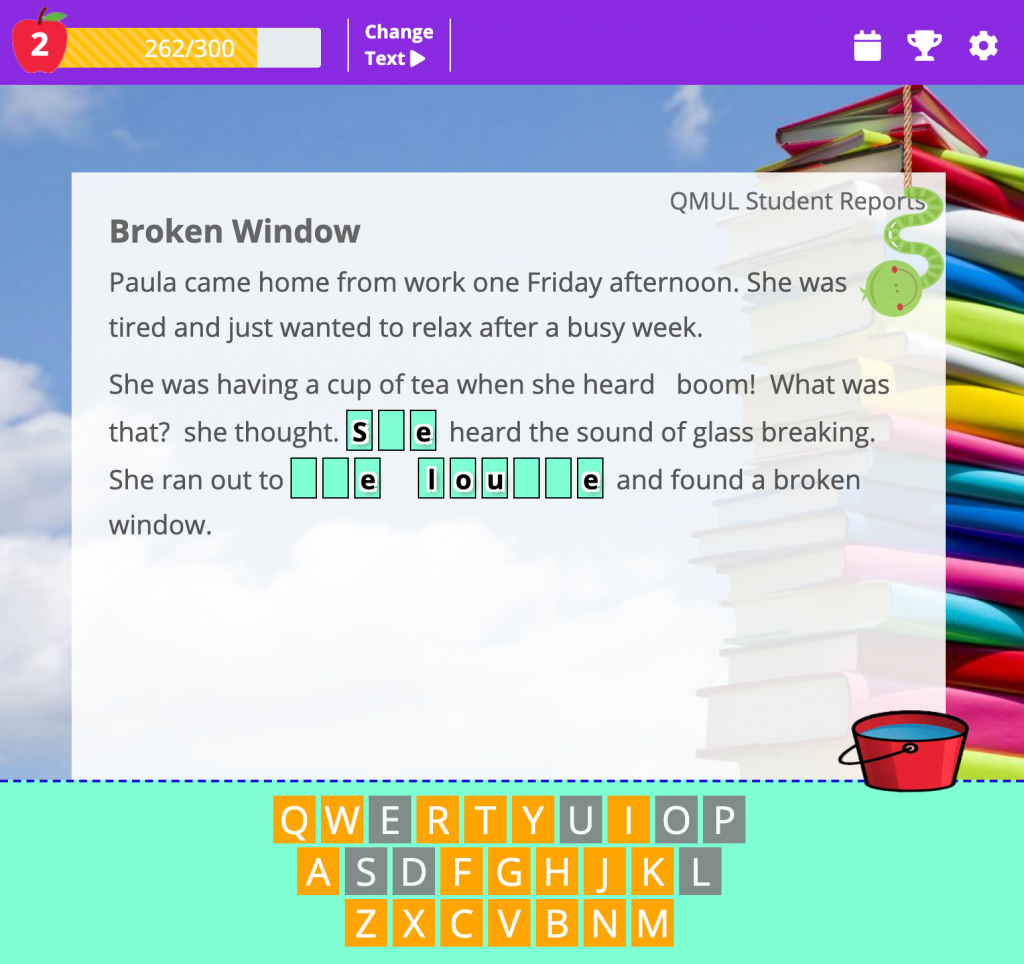

Wormingo: a game-with-a-purpose for NLP research

When: February 2020

Developed by the DALI project, Wormingo is a game-with-a-purpose in which players contribute to science by annotating texts for NLP, while also improving their skill in English through language learning-oriented puzzles.

The game works both on mobile and desktop! You can access it via wormingo.com.

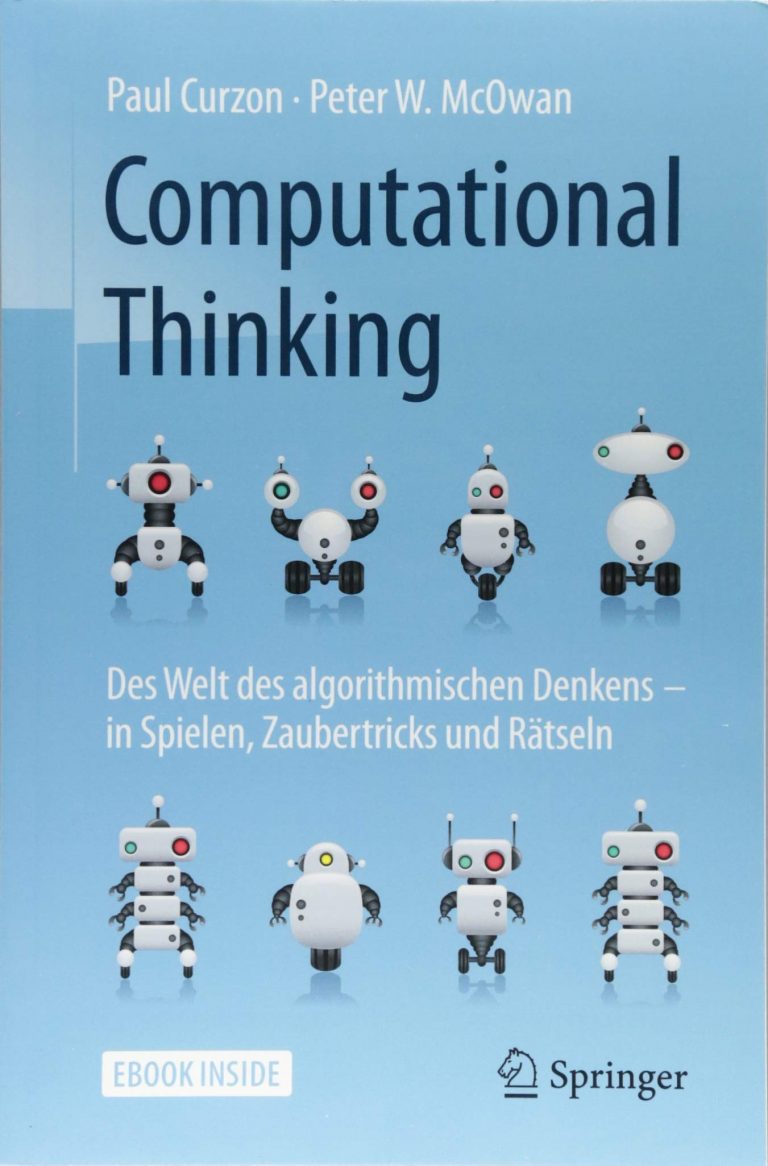

Congratulations to Prof Paul Curzon, recipient of the Booth Education Award for CS education

When: January 2020

Paul Curzon, professor of computer science in the School of Electronic Engineering and Computer Science at Queen Mary University of London, has received the 2020 IEEE Computer Society Taylor L. Booth Education Award for outstanding contributions to the rebirth of computer science as a school subject.

Paul’s 15-year long project, cs4fn, short for “Computer science for fun”, created with Peter McOwan, is an enormous collection of ideas, insights, and resources that demonstrate and showcase the clever ideas from interdisciplinary computer science research, aimed primarily at school-age children.

Simon Peyton Jones of Microsoft Research said “Paul has gone far beyond simply being an effective educator in his subject: he has made a qualitatively new contribution to his nation.”

Capita and Dragonfly AI partner to grow predictive visual analytics platform

When: August 2019

Capita has entered a strategic ‘Scaling Partner’ relationship with Dragonfly Technology Solutions Ltd, an exciting early stage business disrupting the way companies create and optimise content. Capita will provide business development services to Dragonfly and becomes a significant shareholder in the business.

Dragonfly AI gives marketers the power to predict, analyse and influence human attention in a world where people are constantly overloaded with information. This predictive visual analytics platform instantly shows what the human brain sees first, enabling organisations and brands to optimise the performance of their content whether moving or static, digital or physical.

Originally developed over more than a decade by the Cognitive Sciences Research Group at Queen Mary University of London and independently verified by the Massachusetts Institute of Technology (MIT), this is market-defining technology used by household names like GSK, Hachette, McDonalds and NBC Universal.

Dragonfly AI builds on Capita’s position as a leader in customer experience and will help Capita and its clients win the attention of audiences, maximise the value of customer interactions and enable data-driven design decisions that improve customer satisfaction.

Jon Lewis, Capita chief executive said: “Our partnership with Dragonfly AI demonstrates Capita’s dedication to being at the cutting edge of technology and will create UK-based digital jobs while delivering strategic value for Capita, our clients and Dragonfly AI.”

David Mitchell, Co-Founder, Dragonfly AI said: “Working with Capita Scaling Partner is a significant milestone for us and opens the door to a raft of opportunities. The deal gives us a huge amount of access to professional expertise and resources, that will help us drive the adoption of Dragonfly AI across multiple sectors and help us expand rapidly.”

Capita’s scaling partnership with Dragonfly AI brings highly experienced professionals with skills across sales, marketing, strategy, commercial negotiations, investment, business architecture, IT and project management to the table. This will enable Dragonfly AI to think and act like a big company at an early stage in their development and ensure that they continue delivery excellence as they scale up.

To learn more about Dragonfly AI please visit the website https://dragonflyai.co/

New Honorary Professor: Steve Clark

When: May 2019

We are delighted to announce that Stephen Clark has joined the Cognitive Science group as an Honorary Professor. Steve is a distinguished expert in natural language processing, computational linguistics and machine learning. He received his PhD in Computer Science and AI from Sussex and worked as PDRA in Edinburgh before moving to a faculty position in Computer Science at Oxford and then more recently at the Computer Lab in Cambridge. He is now a full-time research scientist at Deepmind where one of his key interests is the acquisition of language by artificial agents in the context of realistic virtual environments.

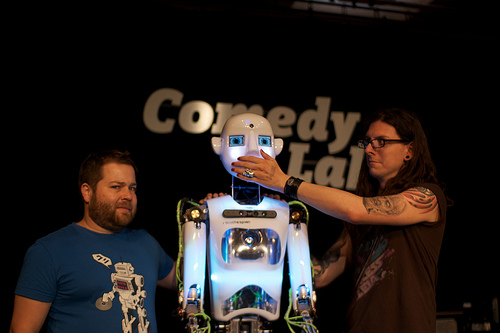

Comedy Lab – undiscovered footage

When: May 2019

On Tour, Unannounced is a robot stand-up act performed for unsuspecting audiences at established comedy nights. The footage from the acts is publicly available for the first time.

More info on Toby Harris’ website.

The Power of Computational Thinking is now translated to German

When: October 2018

Last year Paul Curzon and Peter McOwan published “The Power of Computational Thinking: Games, Magic and Puzzles to Help You Become a Computational Thinker”. Following the Chinese translation earlier this year, the book was recently translated into German by Bernhard Gerl and published by Springer-Verlag Berlin Heidelberg.

Teaching Computing Concepts

When: April 2018

EECS academics in partnership with colleagues in Learning Development have written a chapter for a new Bloomsbury publication – “Computer Science Education: Perspectives on Teaching and Learning in School” edited by Sue Sentance at King’s College London.

“Teaching Computing Concepts” by Paul Curzon, Peter McOwan, James Donohue, Seymour Wright and William Marsh (all Queen Mary University of London) draws on their experience both of teaching undergraduate students at QMUL as well as CPD support (through courses, workshops and resources) for computer science teachers and their public engagement work. Their teaching methods include the use of ‘unplugged’ activities, such as magic tricks, which introduce computing concepts in a fun, inspiring and accessible way.

Curzon, McOwan and Marsh from QMUL work closely with Sentance (KCL) as part of CAS London Regional Centre, a university-led, Government-funded project to support computing teachers through the Computing At School (CAS) network. The EECS-based CS4FN (Computer Science For Fun) magazine and website have been supporting computing students (and teachers) for over 10 years and Teaching London Computing is its sister portal providing resources for teachers.

Chinese translation of the Power of Computational Thinking

When: April 2018

In 2017 Paul Curzon and Peter McOwan published “The Power of Computational Thinking: Games, Magic and Puzzles to Help You Become a Computational Thinker” based on material, ideas and approach from CS4FN. This has now been translated into Chinese by a Taiwanese publisher as a result of the strong following of CS4FN in Taiwan.

Embarrassed Robots

When: April 2018

Artificial intelligence and robotics will play an increasingly important role in supporting our lives. Currently, AI and robotics remain crude – confined to our smartphones, sat-navs, or tasked to build our cars. To fit more seamlessly into our daily lives they will have to learn to successfully reflect our emotions – embarrassment, for example. The Embarrassed Robots project proposes an investigation into how, through material, form and function, a robot can come to express emotion without aping and alienating us.

Embarrassment is a quintessential human emotion and something that in the future any robot occupying a human facing role will need to be able to replicate. This project proposes an investigation into how the design of a robot can come to express embarrassment.

–

This speculative design project is currently being developed by Soomi Park at the Design Museum as one of this year’s Designers in Residence projects.

More info on the project website.

UnsocialVR: The project that lets you fake listening in social VR

When: January 2018

UnsocialVR, Tom Gurion’s advanced project placement, explores what it takes to fake listening in a virtual environment. By pressing a button you can let a set of automated behaviours take control of your avatar, and move away to do something else.

TileAttack!

When: December 2017

TileAttack! is a game-with-a-purpose (GWAP) designed to gather annotations for text segmentation. The current application is labelling mentions for anaphoric corpora.

Chris Wood receives Best paper award at DIS

When: June 2017

Chris Wood, a Media and Arts Technology PhD Student here at Queen Mary, recently received a Best paper award at Designing Interactive Systems (DIS). The conference was held from 10-14 June in Edinburgh, UK. There were 5 winners in total, representing the top 1% of submissions.

Soomi Park selected for the Design Museum’s Designers in Residence programme!

When: May 2017

Soomi Park, a London-based designer and Media and Arts Technology PhD Student here at Queen Mary, has been selected as one of four designers for the Design Museum’s Designers in Residence programme. Her ‘Embarrassed Robots’ project will look at how robots can potentially reflect complex human emotions and what this could mean for the future of artificial intelligence. Read more about it here!

Steve Reich’s Clapping Music wins Public Engagement award

When: February 2017

On Tuesday 7th February 2017 Steve Reich’s Clapping Music won the Involvement award at the QMUL Engagement and Entrepreneurship Awards, in the category of Public Engagement. Steve Reich’s Clapping Music is a free iPhone/iPad app designed to engage audiences with a new music genre, using a novel interactive game-based approach. Players learn to play an iconic minimalist composition, Clapping Music by Steve Reich. Information on the research is available here.

Scholarship schemes available!

Information is available on various scholarship schemes at Queen Mary, open to PhD applicants from a variety of countries. Click here for more details.

Call for Papers: SysMus17 at Queen Mary

When: Wednesday 13th – Friday 15th September 2017

Where: Queen Mary, University of London

As SysMus enters its 10th year, come and celebrate with us in London! SysMus17 is coming September 13th-15th 2017 and will be hosted by the Music Cognition Lab at Queen Mary.

Invitations are open for presentations in the form of live spoken papers, virtual spoken papers and poster presentations. The deadline for submitting is June 30th, 2017. Visit the site for more details and read the call for papers here!

Get your conversation analysed at New Scientist Live

When: Thursday 22nd – Sunday 25th September 2016

Where: ExCeL London

Language and interaction scientists from around the UK will gather at New Scientist Live in London’s Excel Centre to put visitors’ interpersonal interaction under the microscope by analysing conversations between visitors as they happen.

Conversation analysts usually take months or even years to record, transcribe, analyse and write-up their results, but for New Scientist Live, a team from Queen Mary’s Cognitive Science group and Loughborough University’s Department of Social Sciences has developed a new demo format they are calling the ‘Conversational Rollercoaster’ that will speed up the process. While visitors join in spontaneous discussion hosted by the pop-up talk-show ‘Talkaoke’, the analysts will be hard at work recording, studying and presenting research findings about visitors’ conversations as they talk.

“The idea is to reveal the amazing ways people use all the time to manage the unpredictability of their everyday interactions – so they can join in and talk, then step off the ‘conversational rollercoaster’ and see a snapshot of the amazingly intricate thing they just did effortlessly – without even thinking about it – in a new light”: Elizabeth Stokoe, Professor of Social Interaction at Loughborough University.

“It’s like a mix between a live reality talk-show and football commentary, where you have all this spontaneous action in the conversation, then immediate expert analysis – except hopefully with fewer sports clichés”: Saul Albert, main organizer from Queen Mary’s Cognitive Science research group.

CogSci computational linguists Karolina Sylwester & Matthew Purver discover that Democrats and Republicans differ in psychologically-laden language

… including words for swearing, religion and uncertainty!

Research Article Published in PLOS One on 16 September, 2015

The researchers sampled tweets from followers of major Democrat and Republican accounts, and found that party affiliation had some interesting correlations with the language people use. As well as differences in propensity to swear or mention religion, they found differences in emotional content, levels of uncertainty and pronoun use, with liberals more likely to refer to themselves and conservatives to refer to group identity.

Here’s what The Guardian, the Daily Mail, the Scientific American and others have to say!

Dr. Karen Shoop, CogSci Lecturer in Digital Media, on BBC Woman’s Hour

When: Monday 26 January 2015

What do knitting and coding have in common? Dr. Karen Shoop tells us about it, during this podcast, at minute 11:27.

CogSci PhD candidate Louis McCallum’s drumming robot Mortimer takes centre stage in the Royal Institution Christmas Lectures

When: Tuesday 23 December 2014

The 2014 Christmas Lectures: Sparks will fly: How to Hack Your Home, by Professor Danielle George, Dec 23

In the 3rd lecture, which was broadcast on BBC 4 on the 31st of December, Professor George showed how motors can be used to create a robot orchestra, with Mortimer on drums. Play an intro video here.

The 11th International Conference on Computational Semantics (IWCS 2015)

IWCS 2015 will be held at Queen Mary University of London, UK on 14-17th April 2015. IWCS is the bi-yearly meeting of SIGSEM, the ACL special interest group on semantics.

The aim of the IWCS conference is to bring together researchers interested in any aspects of the computation, annotation, extraction, and representation of meaning in natural language, whether this is from a lexical or structural semantic perspective. IWCS embraces both symbolic and statistical approaches to computational semantics, and everything in between.

- 16th December 2014: Paper submissions due (long and short)

- 2nd February 2015: Paper notification of acceptance

- 25th February 2015: Camera-ready papers due

- 1st March 2015: Full workshop material due

- 14th April 2015: Workshops

- 15-17 April 2015: Main conference

New Publication, by PhD Candidate Howard Williams & Prof. Peter McOwan

@Wired: Magic Tricks Created by Artificial Intelligence

Computer scientists at QMUL have taught a computer how to create magic tricks using artificial intelligence. Researchers gave a computer program the outline of how a magic jigsaw puzzle and a mind reading card trick work, as well as the results of experiments into how humans understand magic tricks, and the system created completely new variants on those tricks which can be delivered by a magician.

See also: Daily Mail, International Business Times, The Times, The Mirror, Times of India, Yahoo News, Innovations Report, Engineering & Technology magazine & the Queen Mary Press Release

The Madrid Humanoids Workshop 2014

Deadline: October 22nd, 2014

Topics included but were not limited to:

- performing and artistic robots (including robotic systems that make music, recite poetry, perform comedy, dance, narrate, act, etc.)

- interactive robotic systems (including robotic systems that are involved in, explain, recite, or co-create art or performances)

- learning and problem solving (including robotic systems which find creative solutions to problems or theories of such creative systems)

Audiences, Live! Understanding and augmenting audience dynamics at live events

When: Monday 9th June, 14.00 – 17.00

Where: Rich Mix, 35 – 47 Bethnal Green Road, London, E1 6LA

What makes live performance compelling? How does it differ from relays or recordings? This event explored the emergence of new techniques for capturing, understanding and instrumenting the dynamics of live audience interaction; both performer-audience and audience-audience.

The aim was to promote discussion of how digital technologies are transforming the experience and analysis of live events and was aimed at those creating or producing live events for audiences or consumers, and interested academics. Prof. Pat Healey, Dr. Colombine Gardair, Toby Harris and Kelomenis Katevas presented the audience interaction work of the Cognitive Science Group.

They demonstrated how technology can provide real-time information about people’s reactions to a live event and the new kinds of research on audience engagement that it makes possible. They also illustrated some ways in which these technologies can be used by the Creative and Cultural Industries to enhance the experience of live performance.

The evening concluded with open discussion and refreshments.

This event was delivered in partnership with Creativeworks London and Rich Mix.

Doctoral Colloquium on Miscommunication: 13th May 2014

Lock Keeper’s Graduate Centre, Queen Mary University of London, London E1 4PD, UK

Along with the 3rd International Workshop on Miscommunication we ran a doctoral colloquium. The doctoral colloquium was an informal space for PhD students to discuss the ideas they are currently pursuing, their methodologies and approaches.

If you or someone you know is working on miscommunication in their PhD work – please get in touch.

New Book

Magda Osman’s new book, “Future-Minded: The Psychology of Agency and Control”, is coming out next month! Pre-order it here!

Mind in Society Funding Sandpit

The Centre for Mind in Society will be holding an interdisciplinary funding sandpit aimed at identifying and exploiting potential research collaborations between schools at QMUL.

What:

- presentations from the Research Office & Business Development Office at QMUL

- 1-minute speed presentations by academics

- identifying potential collaborations

- collaborators work together in groups on project ideas and potential funders

- mini-presentations of the resulting project ideas

When: Wed 11th June, 2-6pm (coffee will be provided)

Where: The Royal Foundation of St Katherine, 2 Butcher Row, London E14 8DS

We envisage this being of particular relevance to academics and postdocs, but any interested QMUL staff and students are welcome to join.

Public Debate: Who Is in Control?

A panel of experts from UCL, LSE and QMUL will be taking part in a Question Time public event hosted at Queen Mary University of London, on Tuesday, March 25th.

You can read more about it and book a place here.

In preparation for the event, we are collating questions on the topic of control, which will form part of the debate. If you wish, you can contribute a question to the debate – it will take no more than one minute.

The event will be followed by a drinks reception.

EEG Lab Launch

We are pleased to announce the launch of a new Electroencephalography (EEG) laboratory at Queen Mary, University of London. The new lab is the result of a collaboration between the School of Biological & Chemical Sciences (SBCS) and the School of Electronic Engineering and Computer Science (EECS) and was funded partly by an EPSRC equipment grant for Early Career Researchers. The lab will be run by Marcus Pearce (director) from EECS and Tiina Eilola (deputy director) from SBCS.

When: Tue 11th March 2014

Where: Informatics Hub on the 3rd floor of the Peter Landin (CS) Building.

Everyone is welcome. The launch will include a tour of the lab, which has the following facilities:

- 64-channel EGI dense-array EEG system for continuous surface recording of electrical brain activity;

- Physiology toolkit for recording respiration rate, heart rate, skin conductance and EMG;

- Soundproof booth (IAC) with mirrored window for auditory research;

- Speakers, monitors, button-box and other input devices cabled through soundproof ducts in the booth;

- Stimulus PC running e-prime, mirroring the participant’s display.

3rd International Workshop on Miscommunication

We are pleased to announce that QM’s CogSci group will host the 2014 International Workshop on Miscommunication. The workshop builds on two previous meetings: one at QMUL in 2006, and one held as part of the Cognitive Science conference in Stresa in 2005.

The workshop will bring together researchers from around the world to discuss the notion that processes of detecting and dealing with miscommunication are key to explaining how communication is possible in principle and how it is effected in practice.

More information is available on the workshop microsite.

WISE Award won by CogSci student Nela Brown

PhD candidate Nela Brown received the WISE (Women in Science and Engineering) Award in the Highly Commended WISE Leader category, in recognition of her work with G.Hack, WISE@QMUL and Flossie. The award was presented by Her Royal Highness The Princess Royal during the November 15th ceremony in London.

From Blickets to Synapses

New paper on causal inference using multiplicative STDP + plasticity rules, by Chrisantha Fernando.

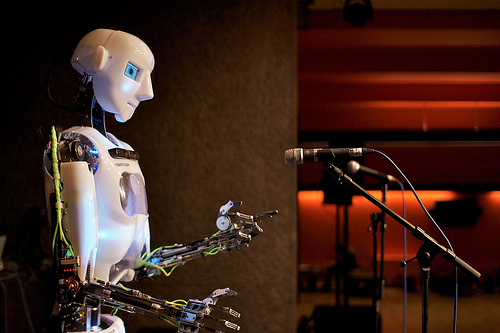

Comedy Lab: Human versus Robot

A Hack the Barbican Activity

7th and 8th of August. 6.00pm – 6:30 pm, Club Stage, Lower Ground, Barbican Centre

What makes a good performance? By pitting stand-up comics Tiernan Douieb and Andrew O’Neill against a life-size robot in a battle for laughs, researchers at Queen Mary, University of London hope to find out more, and are inviting you along.

In a collaboration between the labs of Queen Mary’s Cognitive Science Research Group, Robothespian’s creators Engineered Arts, and the open-access spaces of Hack The Barbican, the researchers are staging a stand-up gig where the headline act is a robot, as a live experiment into performer-audience interaction. This research is part of work on audience interaction being pioneered by the Cognitive Science Group. It is looking at the ways in which performers and audiences interact with each other and how this affects the experience of ‘liveness’. The experiment with Robothespian is testing ideas about how comedians deliver their material to maximize comic effect. (For more on QMUL audience interaction activities, see the 2012 Ends of Audience workshop below.)

Hack The Barbican // 5 – 31 AUGUST 2013 // ARTS + TECHNOLOGY + ENTREPRENEURSHIP

From Research to Design: Challenges of Qualitative Data Representation and Interpretation in HCI

BCS-HCI 2013 Workshop – deadline extended!

Dear HCI community,

We have received a number of high quality submissions so far and would like to fill up the workshop capacity to ensure we get as diverse input as possible from researchers using different qualitative methods, different ways of representing qualitative data and different ways of translating those representations into the design of technologies. The workshop participants will work in groups using different data sets with the aim of assessing the challenges of translating qualitative findings into design and, through collective discussion, proposing a starting point for a framework of possible solutions.

Extended deadline submission requirements: Up to a maximum of 1 A4 page (500 words) to include method/s of qualitative analysis you have employed and list of issues you have encountered when moving from qualitative data to design.

Submission deadline: Friday 9th August 2013

Notification of acceptance: Friday 16th August 2013

Workshop date/time: Monday 9 September 2013, 14.00-17.30

Conference venue: Brunel University, London, UK.

Submission format: BCS ewics format to be submitted in pdf by email to Nela Brown.

Reviewing process: All submissions will be reviewed by at least two members of the programme committee. There will also be a meta review process to ensure consistency.

Workshop outcome: All accepted contributions will be archived online through the workshop website and the organizers will investigate the possibility of workshop contributions being extended to form a special issue of a relevant journal, such as the International Journal of Human-Computer Studies or Interacting with Computers.

Conference website: http://hci2013.bcs.org

Workshop website: http://www.eecs.qmul.ac.uk/~nelabrown/index.html

Workshop cost: £60*

*Authors of accepted submissions will be able to register only for the workshop. If you are planning to attend the full conference, please remember to register for the conference before 1 August to take advantage of the Early Bird prices!

Workshop on Information Dynamics of Music

The one-day workshop celebrated the successful completion of our research project Information and Neural Dynamics in the Perception of Musical Structure, funded by the Engineering and Physical Sciences Research Council (EPSRC). The project included members at Queen Mary, University of London and Goldsmiths, University of London.

When: Thursday 21 March 2013, 9am – 5pm

Where: Room RHB 137a, Goldsmiths, University of London, London SE14 6NW, UK

The workshop provided a forum for dissemination and discussion of cutting edge research on dynamic predictive processing of musical structure in:

- probabilistic and information-theoretic models;

- cognitive, psychological and neural processing;

- musicological analysis.

Speakers included:

- Prof. Moshe Bar, Harvard Medical School

- Prof. David Feldman, College of the Atlantic, Maine

- Prof. Israel Nelken, Hebrew University, Jerusalem

in addition to members of the IDyOM team. Attendance was free.

Ends of Audience Workshop

- Dates: May 30-31, 2012

- Location: Arts 2, Queen Mary University of London.

- Highlights: http://www.flickr.com/photos/matqmul/sets/72157630067232158

- Submissions: 300 word abstract by email before Jan 30th 2012, Midnight GMT.

People in audiences act: they talk, clap, heckle, sigh, inhale, exhale, rustle, twitch, tweet, dance, flirt, laugh, whisper, shuffle, cough… in doing so, they interact. There is a structure and dynamic to these responses which is central to the experience of being in a live audience. This workshop brought together researchers and professionals with interests in performance, interaction and technology who are working on understanding, instrumenting or experimenting with these dynamics, and the shifting ends of audience that they reveal.

We invited proposals for oral presentations, live demonstrations, installations and performance experiments that explore the nature of interaction in audiences. We especially welcomed interventions, participatory formats and creative approaches to convening workshop sessions. Topics included but were not restricted to:

the dynamics of collective and individual experiences of performance,

the communicative organisation of audience-audience interaction,

non-verbal interaction and emotional contagion,

remote and co-present audience interactions,

the phenomenology of audience interaction,

changing historical and cultural understanding of the audience,

technologies and methods for sensing audience dynamics,

technologies and methods for enhancing and manipulating audience engagement.

Invited Speakers:

- Christoph Bregler (NYU Movement Lab)

- Louise Blackwell & Kate McGrath (Fuel)

- Usman Haque (Haque Design + Research Ltd, Connected Environments Ltd)

- Nicholas Ridout (Theatre and Performance Studies QMUL)